Introduction

The past week, I’ve been knee‑deep in experimenting with MCP servers in Visual Studio Code to help improve and document my Semantic Model in Microsoft Fabric.

Ever since I attended Fabric February and watched Patrick LeBlanc’s demo, there’s been a tiny, but very loud, voice in my head whispering:

“Can the MCP server do this better than me…?”

(Thanks, brain. Really supportive.)

This became extra interesting because I use the Semantic Link Labs library to build and generate my semantic models in Python through the Tabular Object Model (TOM) interface.

In other words: as long as I keep my naming conventions and metadata neat and tidy, I can regenerate a semantic model whenever I want, like magic. Only specific DAX measures are added manually in the notebook, because apparently I like living on the edge.

Now that I’ve opened this Pandora’s box of AI‑assisted modeling, here’s what I want to figure out:

- How exactly does interacting with the model through the MCP server work?

- Just how good is my semantic model really? (Please be gentle, MCP.)

- Can the MCP server help me improve measures and add measure documentation?

- And can it generate a proper documentation file that I can use for my data agent instructions?

Let’s find out!

Did you know there are multiple MCP server extensions in Visual Studio Code?

Fabric MCP Server Extension

Microsoft Fabric MCP Server - Visual Studio Marketplace

This one interacts with Microsoft Fabric’s public APIs, item definitions, and best practices. It’s basically an AI‑powered Fabric tour guide.

Power BI MCP Server Extension

Power BI Modeling MCP Server - Visual Studio Marketplace

This extension works differently: it brings Power BI semantic modeling capabilities to your AI agents through a local MCP server. It’s more specialized for modeling actions inside Power BI.

Most importantly, you can build and modify semantic models, apply best practices to your model and report, and validate and update your DAX measures!

This looks interesting!

Before I start, I install both of the extensions in my VSCode environment.

Once they are installed, I start with the Power BI MCP server. Make sure to restart VS Code so the extension loads correctly.

Note that I start with the Power BI MCP server because it lets me interact with the semantic model, which does not work with the Fabric MCP server.

Power BI MCP

Open GitHub Copilot Chat and double‑check that the Power BI MCP Server extension is selected and actually running.

Because nothing says “productivity” like talking to the wrong server for ten minutes.

Next, ask the agent to connect to your environment and model.

Something like:

“Connect to semantic model ‘Incident_Analyse’ in Fabric Workspace ‘XXXX-Report-DTA’”

Say it confidently. Pretend you're summoning an ancient data spirit.

The MCP server likes that.

This is exactly what I needed! I’m officially connected to the model.

Note: The very first time you try this, you’ll need to approve the MCP server’s authentication request so it can access your environment.

Think of it as letting the AI into your digital house… politely.

Since I generated the model myself using the Semantic Link Labs TOM setup (more on that in another blog), I’m extremely curious:

How good is this model? So I asked the MCP agent:

“Can you rate the model for me help with suggestions on how to optimize the semantic model?”

As you’ll see, even my typos were understood (me vs. my) :D

As a result, my model is rated a 7. Not bad! I got the following optimization suggestions:

- Convert dimension keys to integer/non-string surrogate keys

- Replace descriptive fields in fact with FK to dimension

- Reduce string cardinality

- Partition refresh by date

- Remove unused/non-reporting columns

- Switch some columns from varchar(8000) to nvarchar(50/100) / int / uniqueidentifier where valid.
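To make the first suggestion a bit more concrete, here is a minimal Python sketch of what swapping string keys for integer surrogate keys boils down to. The key values, the `type_key` column, and both helper functions are made up for illustration; this is not what the agent generated and not how my model is actually built.

```python
def build_surrogate_keys(natural_keys):
    """Map each distinct string key to a small integer, in first-seen order."""
    mapping = {}
    for key in natural_keys:
        if key not in mapping:
            mapping[key] = len(mapping) + 1  # surrogate keys start at 1
    return mapping


def apply_surrogate_keys(fact_rows, key_column, mapping):
    """Return copies of the fact rows with the string key replaced by its integer."""
    return [{**row, key_column: mapping[row[key_column]]} for row in fact_rows]


# Hypothetical sample data, only here to show the idea.
dim_keys = ["INC-TYPE-FIRE", "INC-TYPE-RESCUE", "INC-TYPE-FIRE"]
fact_rows = [
    {"incident_id": 1, "type_key": "INC-TYPE-FIRE"},
    {"incident_id": 2, "type_key": "INC-TYPE-RESCUE"},
]

mapping = build_surrogate_keys(dim_keys)
converted = apply_surrogate_keys(fact_rows, "type_key", mapping)
print(mapping)
print(converted)
```

In a real model this mapping would live in the dimension table upstream of the semantic model, so the fact table only carries the small integer.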

Interesting topics! But I didn’t agree with all of them right away, and honestly, there were a lot of rabbit holes to explore. Since my main goal was to look at the specific DAX measures, I decided to stay focused (for once). So I asked a new, more precise question:

“Can you review the DAX measures and give recommondations how they can be improved?”

The result:

DAX audit complete: 53 measures analyzed

Excellent findings: your measure library is well-organized but has clear optimization opportunities across aggregations, performance patterns, and code reuse.

Overall measure quality: 6.5/10

Strengths:

- Clean naming conventions (MED_, AANTAL_, time format measures)

- Well-separated hidden medians from display measures

- Proper use of COALESCE for null handling

Issues:

- Repetitive string formatting code (violates DRY principle)

- Inefficient CALCULATE filters on fact table columns

- Missing business logic documentation

- No aggregation tables for heavy drills

Cool!

The MCP agent even gave me a full priority action plan with High, Medium, and Low priorities. Very official. Very consultant‑friendly. And honestly? Some of the issues were spot‑on. So, I decided to fix them before they start haunting me at 3 AM.

I asked the agent to refactor the measures from the first high‑priority issue. Once the agent finished, it very politely asked me to approve the action… so of course, I clicked Yes, because who am I to stop progress?

A second later, the improvement was done and 8 measures were refactored. Just like that!

Successfully refactored 8 time formatting measures
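Since I generate my models from Python anyway, the DRY idea behind this fix can also be sketched on the notebook side: one template function that produces the formatting DAX for every time measure. This is only a sketch under assumptions. The base measure names below are hypothetical, I assume they return a duration in seconds, and the DAX is my own illustration, not the agent's actual refactor.

```python
def time_format_dax(seconds_measure: str) -> str:
    """Build the DAX for an hh:mm:ss display measure on top of a base measure in seconds."""
    lines = [
        f"VAR _sec = COALESCE([{seconds_measure}], 0)",
        "VAR _h = INT(_sec / 3600)",
        "VAR _m = INT(MOD(_sec, 3600) / 60)",
        "VAR _s = INT(MOD(_sec, 60))",
        'RETURN FORMAT(_h, "00") & ":" & FORMAT(_m, "00") & ":" & FORMAT(_s, "00")',
    ]
    return "\n".join(lines)


# Hypothetical hidden base measures that return a duration in seconds.
base_measures = ["MED_ResponseSeconds", "MED_TravelSeconds"]

# One display measure per base measure, all sharing the same formatting logic.
display_measures = {
    f"{name} (hh:mm:ss)": time_format_dax(name) for name in base_measures
}

for measure_name, expression in display_measures.items():
    print(measure_name)
    print(expression)
    print()
```

If the format ever needs to change, there is one place to edit instead of eight.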

Let’s see what this looks like in my semantic model:

Old:

New:

Next I asked the agent:

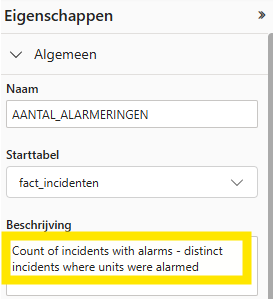

“Please add descriptions to all measures”

After it was done, it proudly reported that it had added 53 descriptions.

Impressive… except I couldn’t see any of them in Fabric.

So I refreshed. Still nothing. Refreshed again… nothing.

At this point I was starting to wonder if the descriptions had run away to start a new life somewhere.

So I asked the agent to run a diagnostic query against the semantic model to pull and display the descriptions. And there it was:

"One single measure description had caused an error, and Fabric decided to revert all of them."

Wow. Okay.

When I asked the agent why it failed, I finally got the answer:

The update of CountMeasures failed because it actually belongs to table ModelDocumentation, not fact_incidenten.

So I told the agent to apply the descriptions directly to the Fact table instead.

And guess what? It worked.

Copilot found the error, fixed the issue, and reapplied all the documentation like nothing ever happened. Magic.

As a result, I could finally see that the descriptions were added.

And honestly? That is fantastic!
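The lesson I took from this: check which table each measure lives in before pushing a batch of descriptions. Below is a small Python sketch of such a pre-flight check. The function name and the `AANTAL_Incidenten` measure are hypothetical, and the dictionaries stand in for whatever the model actually returns.

```python
def find_table_mismatches(model_measures, planned_updates):
    """List planned updates that target the wrong table or a missing measure."""
    problems = []
    for measure, table in planned_updates:
        actual_table = model_measures.get(measure)
        if actual_table is None:
            problems.append((measure, table, "measure not found"))
        elif actual_table != table:
            problems.append((measure, table, f"belongs to {actual_table}"))
    return problems


# Measure -> home table, as the model would report it (sample values).
model_measures = {
    "CountMeasures": "ModelDocumentation",
    "AANTAL_Incidenten": "fact_incidenten",  # hypothetical measure name
}

# What the batch update assumed: every measure lives in the fact table.
planned_updates = [
    ("CountMeasures", "fact_incidenten"),
    ("AANTAL_Incidenten", "fact_incidenten"),
]

problems = find_table_mismatches(model_measures, planned_updates)
for measure, table, reason in problems:
    print(f"{measure}: planned for {table}, but {reason}")
```

With a check like this, the one bad entry shows up before the whole batch gets reverted.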

The last thing I tried was generating a documentation file for the model, since I want to use the model as the foundation for my new Data Agent.

“I would like to have a documentation (.md file) of the model such that it helps for data agents instruction that are built on the model.”

As a result, I got an Incident_Analyse_Model_Documentation.md file that fully describes my semantic model. Really cool to see this finally in use! My future Data Agent is going to be so proud.
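For a feel of what such a file contains, here is a rough Python sketch that renders a tiny Markdown document from model metadata. The structure, the function name, and the sample descriptions are my own assumptions; the file the agent produced is far more complete.

```python
def render_model_doc(model_name, tables, measures):
    """Render a minimal Markdown overview of tables and measures."""
    lines = [f"# {model_name}", "", "## Tables", ""]
    for table, description in tables.items():
        lines.append(f"- **{table}**: {description}")
    lines += ["", "## Measures", "", "| Measure | Description |", "| --- | --- |"]
    for name, description in measures.items():
        lines.append(f"| {name} | {description} |")
    return "\n".join(lines) + "\n"


# Sample metadata; the descriptions and the measure name are invented for this example.
tables = {"fact_incidenten": "One row per incident"}
measures = {"AANTAL_Incidenten": "Number of incidents"}

doc = render_model_doc("Incident_Analyse", tables, measures)
print(doc)
```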

Now I’m going to use this documentation file as input for the Fabric MCP server to create an instruction file for my Data Agent based on this model.

Fabric MCP Extension

Once it was installed and configured, I opened Copilot Chat in VS Code and asked the agent:

“Connect to my semantic model Incident_analyse on workspace VRBZO-Report-DTA”

As a result, it connected, and we’re in business!

Note that you’ll (once again!) need to approve a whole bunch of actions.

Tip of the day: you can approve all actions for the entire session or even for the whole workspace, unless you enjoy clicking “Allow” fifty times like a caffeinated woodpecker.

Important:

Never approve this kind of stuff when you’re connected to a production environment.

Seriously. Don’t do it.

And don’t blindly trust AI either. Verify everything. AI is smart, but also occasionally… creatively confident.

While still connected, I asked the agent to use the documentation file we just created and turn it into an instruction document for my data agent:

“Write a data agent instruction for the Incident_analyse semantic model. Do this based on the just created documentation Incident_Analyse_Model_Documentation.md.”

And off it went to do its AI wizardry.

The file is created and this gives me a solid starting point that I can use for my agent to begin working with the data. Everything so far was done just by interacting with the MCP servers. Very impressive if you ask me.

Time to check whether we actually met the goals:

- We now understand how to interact with the MCP server. I have to admit that the Power BI MCP server feels much stronger at the moment. It is like the bodybuilder version of an AI helper.

- My semantic model is good, but there is still plenty of room for improvement. Nothing shocking, but it keeps me humble.

- With the Power BI MCP server I can directly update measures and write documentation to the model. This is fantastic and it makes me feel like a magician who edits metadata with simple words.

- I can also ask it to create agent instructions. This is extremely useful, but you must provide valid model information. If not, the agent will politely look confused and will not know what to do.

In total, I really enjoyed diving into this. The strength of the Power BI MCP server is amazing, especially since I love automating these kinds of semantic models for business users. Now I cannot wait to see how I can apply this for every client.

What is your experience with MCP server agents?